Entropy Calculator: Free and Easy Online Tool

Entropy measures the molecular disorder in a large system. It helps understand how energy disperses in physical and chemical processes.

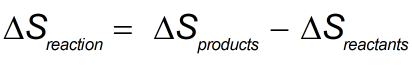

Entropy Formula

What is Entropy?

Entropy is a measure of the randomness of a system, or of the amount of energy dispersed within it. In thermodynamics, it describes how energy is distributed and transformed. A system with higher entropy is considered more disordered, while a system with lower entropy is considered more ordered.

Entropy is central to the second law of thermodynamics, which states that the total entropy of an isolated system can never decrease over time; it can only stay the same or increase, signifying the irreversibility of natural processes.
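The second law described above can be written compactly as an inequality. The symbol used for the total entropy change of the isolated system is notation introduced here, not taken from the tool:

```latex
\Delta S_{\text{total}} \geq 0
```

Equality holds only for an idealized reversible process; any irreversible process gives a strictly positive change.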

What is an Entropy Calculator?

An Entropy Calculator is a tool used to calculate the entropy of a system, which is a measure of molecular disorder or randomness in thermodynamics. It helps determine the amount of energy in a system that is unavailable to perform work.

In the entropy formula shown above, ΔS_reaction is the total entropy change for the reaction, and S_products and S_reactants are the entropy values of the products and reactants, respectively. The tool is useful in thermodynamics for determining how disorder changes in a given reaction.
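As a rough illustration of the products-minus-reactants calculation, here is a minimal Python sketch. The function name `reaction_entropy`, the use of stoichiometric coefficients as multipliers, and the sample numbers are all assumptions made for illustration; they are not the tool's actual implementation or real tabulated data.

```python
def reaction_entropy(products, reactants):
    """Return the entropy change: sum over products minus sum over reactants.

    Each argument is a list of (coefficient, molar_entropy) pairs.
    Molar entropies are assumed to be in J/(mol*K).
    """
    total_products = sum(n * s for n, s in products)
    total_reactants = sum(n * s for n, s in reactants)
    return total_products - total_reactants


# Hypothetical values chosen for easy arithmetic, not real tabulated data.
products = [(2, 190.0)]
reactants = [(1, 190.0), (3, 130.0)]

delta_s = reaction_entropy(products, reactants)
print(f"Entropy change: {delta_s:.1f} J/K")
```

A negative result, as in this example, means the products are more ordered than the reactants.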

Why Use the Entropy Calculator Tool?

Simplifies Complex Calculations

The Entropy Calculator tool automates the calculation process, making it easier to compute entropy, which can be complex when done manually, especially for systems with multiple states or variables.

Accurate Results

It helps provide precise calculations, minimizing the chance of errors that may occur during manual computations. This accuracy is crucial in fields like thermodynamics, statistical mechanics, and information theory.

Saves Time

Manually calculating entropy can be time-consuming, particularly for large datasets. The tool allows users to input values quickly and obtain results instantly, enhancing productivity.

Versatility

The calculator can be used in many branches of science, such as chemistry, physics, and engineering, to study the spontaneity of a reaction.
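Spontaneity at constant temperature and pressure is commonly judged with the Gibbs free energy change, ΔG = ΔH − TΔS, where a negative ΔG indicates a spontaneous process. The sketch below shows how an entropy change feeds into that check. The function name and the input values are hypothetical, and nothing here implies the tool itself computes ΔG.

```python
def gibbs_energy_change(delta_h_joules, delta_s_joules_per_kelvin, temperature_kelvin):
    """Return delta G = delta H - T * delta S, in joules."""
    return delta_h_joules - temperature_kelvin * delta_s_joules_per_kelvin


# Hypothetical reaction: exothermic (delta H < 0) with decreasing entropy.
delta_g = gibbs_energy_change(-92_000.0, -200.0, 300.0)

# A negative delta G means the reaction is spontaneous at this temperature.
is_spontaneous = delta_g < 0
print(f"delta G = {delta_g:.0f} J, spontaneous: {is_spontaneous}")
```

Because the entropy term is multiplied by temperature, the same reaction can stop being spontaneous at a sufficiently high temperature.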

User-Friendly Interface

The tool is designed to be easy to use, often with a simple interface where you can enter values and get results without needing extensive background knowledge on entropy formulas.

Where Can the Entropy Calculator Tool Be Used?

Thermodynamics

In physics and engineering, the entropy calculator can be used to analyze thermodynamic processes, helping determine the entropy change in systems such as heat engines and refrigerators.
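For a reservoir exchanging heat at constant temperature, the entropy change is ΔS = Q/T. The following sketch applies that relation to a simple heat engine. The function name and the reservoir values are hypothetical, chosen only to illustrate the bookkeeping.

```python
def reservoir_entropy_change(heat_joules, temperature_kelvin):
    """Return delta S = Q / T for heat Q entering a reservoir at temperature T.

    Heat leaving the reservoir is passed as a negative number.
    """
    if temperature_kelvin <= 0:
        raise ValueError("Temperature must be positive (in kelvin).")
    return heat_joules / temperature_kelvin


# Hypothetical engine: takes 1000 J from a 500 K source,
# rejects 700 J to a 300 K sink, and delivers 300 J of work.
hot = reservoir_entropy_change(-1000.0, 500.0)
cold = reservoir_entropy_change(700.0, 300.0)
total = hot + cold

print(f"Total entropy change of the reservoirs: {total:.3f} J/K")
```

The total is positive, which is consistent with the second law for a real (irreversible) engine.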

Statistical Mechanics

Researchers can utilize the tool to calculate the entropy of different statistical ensembles, aiding in the study of molecular behavior and energy distribution at the microscopic level.
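In statistical mechanics, two standard expressions are the Boltzmann entropy, S = k_B ln W for W equally likely microstates, and the Gibbs entropy, S = −k_B Σ p ln p for a probability distribution over microstates. The sketch below implements both. The function names are illustrative and are not claimed to be part of the tool.

```python
import math

# Boltzmann constant in J/K (exact value in the SI since 2019).
K_B = 1.380649e-23


def boltzmann_entropy(num_microstates):
    """Return S = k_B * ln(W) for W equally likely microstates."""
    if num_microstates < 1:
        raise ValueError("The number of microstates must be at least 1.")
    return K_B * math.log(num_microstates)


def gibbs_entropy(probabilities):
    """Return S = -k_B * sum(p * ln p), skipping zero-probability states."""
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0)


# For a uniform distribution the two expressions agree.
uniform = [0.25, 0.25, 0.25, 0.25]
print(boltzmann_entropy(4), gibbs_entropy(uniform))
```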

Information Theory

In computer science, the entropy calculator is essential for quantifying the information content and uncertainty in data sets, which is crucial for data compression and transmission efficiency.
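The information-theoretic quantity is the Shannon entropy, H = −Σ p log2 p, measured in bits per symbol. Here is a minimal sketch that estimates it from symbol frequencies. The function name `shannon_entropy` is an illustrative choice, and whether the tool supports this mode is not stated in the source.

```python
import math
from collections import Counter


def shannon_entropy(data):
    """Return the Shannon entropy of a sequence, in bits per symbol."""
    total = len(data)
    if total == 0:
        return 0.0
    counts = Counter(data)
    probabilities = [count / total for count in counts.values()]
    return sum(-p * math.log2(p) for p in probabilities)


# Two equally frequent symbols carry one bit each; a constant string carries none.
balanced = shannon_entropy("aabb")
constant = shannon_entropy("aaaa")
print(balanced, constant)
```

Lower entropy means the data is more predictable and can, in principle, be compressed further.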

Machine Learning

In machine learning and data mining, the entropy calculator is used to evaluate the purity of datasets and optimize decision trees, enhancing classification models.
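Decision-tree learners typically use entropy through information gain: the parent node's label entropy minus the size-weighted entropy of the child nodes. The sketch below shows that calculation. The helper names and the toy labels are hypothetical.

```python
import math
from collections import Counter


def label_entropy(labels):
    """Return the entropy of a list of class labels, in bits."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


def information_gain(parent, children):
    """Return parent entropy minus the size-weighted entropy of the children."""
    total = len(parent)
    weighted = sum(len(child) / total * label_entropy(child) for child in children)
    return label_entropy(parent) - weighted


# Toy example: a split that separates the two classes perfectly.
parent = ["yes", "yes", "no", "no"]
gain = information_gain(parent, [["yes", "yes"], ["no", "no"]])
print(f"Information gain: {gain:.2f} bits")
```

A split that leaves the class mix unchanged in each child gives a gain of zero, so the tree prefers splits with higher gain.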

Education and Research

The tool serves as a valuable resource for students and researchers, allowing them to perform quick calculations for experiments, assignments, or theoretical studies related to entropy.

How to Use the Entropy Calculator tool

Using an Entropy Calculator tool is generally straightforward and involves the following steps:

Select Units

For both inputs, select the energy unit (joules, kilojoules, or calories) from the dropdown list.
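If the two inputs use different units, they need to be converted to a common unit before subtracting. The sketch below shows one way to do this. It assumes the thermochemical calorie (1 cal = 4.184 J); which calorie definition the tool uses is not stated, and the names here are hypothetical.

```python
# Conversion factors to joules. The calorie value assumes the
# thermochemical calorie; the tool's own definition is not documented.
JOULES_PER_UNIT = {
    "J": 1.0,
    "kJ": 1000.0,
    "cal": 4.184,
}


def to_joules(value, unit):
    """Convert an energy value in the given unit to joules."""
    try:
        factor = JOULES_PER_UNIT[unit]
    except KeyError:
        raise ValueError(f"Unsupported unit: {unit!r}") from None
    return value * factor


print(to_joules(2, "kJ"), to_joules(1, "cal"))
```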

Input Values

In the input fields, enter the coefficient and the values for the initial and final states of the system.

Calculate

Once all the necessary data is entered, the calculator automatically processes the information and computes the entropy value.

View Results

The output shows the entropy change, that is, the change in the system's level of disorder or randomness.

Clear Button

This button clears the input fields so you can start a new calculation. It is helpful when you need to perform multiple calculations or change the input values.

Conclusion

The Entropy Calculator is useful to anyone interested in thermodynamics and chemical reactions. It simplifies entropy-change calculations that are tedious to do by hand. By giving precise results quickly, it supports comprehension and decision-making in several branches of science.

Whether you are a student or a professional, this calculator can save you time and help you understand the role of entropy in various natural processes. For quick calculations, use our weetools. No sign-up, registration, or captcha is required to use this tool.